📖 Permutation City is a “hard sci-fi” novel published in 1994. In the book, humankind develops the ability to scan human brains and simulate them on computers.

Consciousness of a digital mind

If we managed to simulate the workings of a human brain (in all its complexity, electrical and chemical), would the resulting computer program be conscious?

- if consciousness is somehow tied to the biological, chemical and physical substance of the brain, then no, the computer program would not be conscious

- on the other hand, if consciousness is an emergent result of processing information in specific patterns (which happen to occur in a human brain, and would be reproduced in the simulation), then yes, the program would be conscious

Rocks in the desert (substrate independence)

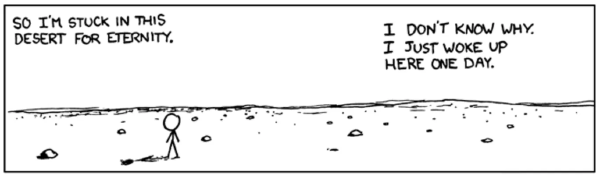

A Bunch of Rocks, XKCD #505

Here’s a slightly unsettling realisation: if a computer program can be “conscious” (have subjective experience) purely as a result of the way it processes information, then it should not matter on what “hardware” it runs.

It could be run on 100 different computers. It could be run out of order (one computer computing the transition from state 6 to state 7, then another computing the transition from state 2 to state 3). It could be run using a bunch of rocks in an infinite desert, slowly rearranged by an immortal and very patient dude (see this XKCD at your own risk).

None of this would be perceptible to the person being simulated. This idea is called “substrate independence”.
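To make the "many computers, out of order" idea a bit more concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: `step` is a made-up deterministic update rule standing in for one tick of a simulation, and `run_scrambled` pretends to hand individual transitions to different machines in shuffled order. Note that it sidesteps one real difficulty: to compute a late transition first, you already need to know its input state, so the sketch simply reuses the states from an earlier in-order run.

```python
import random

def step(state):
    """Hypothetical deterministic update rule: one 'tick' of a toy simulation."""
    return (state * 1103515245 + 12345) % 2**31

def run_in_order(initial, n_steps):
    """Compute the states one after another, the usual way."""
    states = [initial]
    for _ in range(n_steps):
        states.append(step(states[-1]))
    return states

def run_scrambled(reference, n_machines, seed=0):
    """Recompute every transition in shuffled order, spread over pretend machines.

    The input of each transition is taken from `reference` (an earlier
    in-order run); that shortcut is an assumption of this sketch.
    """
    rng = random.Random(seed)
    order = list(range(len(reference) - 1))
    rng.shuffle(order)
    result = [reference[0]] + [None] * (len(reference) - 1)
    schedule = []  # (machine id, transition index), in the order the work was done
    for job, i in enumerate(order):
        machine = job % n_machines       # label only; nothing is actually distributed
        result[i + 1] = step(reference[i])
        schedule.append((machine, i))
    return result, schedule

in_order = run_in_order(initial=42, n_steps=10)
scrambled, schedule = run_scrambled(in_order, n_machines=100)
print(scrambled == in_order)  # True: the sequence of states is the same
```

Looking only at the resulting states, there is no trace of which machine did which step or in what order the work happened. That is the property the thought experiment leans on; the sketch says nothing, of course, about whether anything is experienced along the way.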

The dust theory

In the book, wealthy aging people scan their minds and run them on computers to escape death. But this doesn’t really buy them immortality: they keep running only as long as the computers hosting them stay switched on. Is there a solution to this problem?

The characters of the book look for it in the dust theory. It’s a mind-bending idea (which is why it took an entire novel to try to explain it), but it boils down to this:

- if it doesn’t matter how the computation of the simulation is performed (substrate independence), it may be sufficient that each step of the computation is represented somehow, at some point, somewhere in the vast complexity of the universe

- if the universe is very big and chaotic, we may expect every step of the simulation to “find itself” represented somewhere in the dust particles (or rocks in the desert) of the universe

Therefore, it may be enough to configure and start the simulation to ensure that it keeps running indefinitely, with no computers needed to sustain it.

Conclusion

Permutation City bent my mind a little.