Artificial intelligence is a very broad field. Every time a machine performs a task associated with human intelligence, it’s “artificial intelligence”. Composing text, recognizing what’s in a picture, producing speech, driving a car, planning a trip, all of these and more are examples of AI.

This diversity of applications used to be matched by a diversity of computing techniques. Each domain would develop their own methods, suitable for the particular challenges and goals. This is increasingly no longer the case, as neural networks are adapted as the method of choice across a broad array of applications.

Let’s take a look at the types of problems we now solve with neural networks, and take a nostalgic look at the older methods they’re replacing.

Domains

🏭 Industrial quality inspection

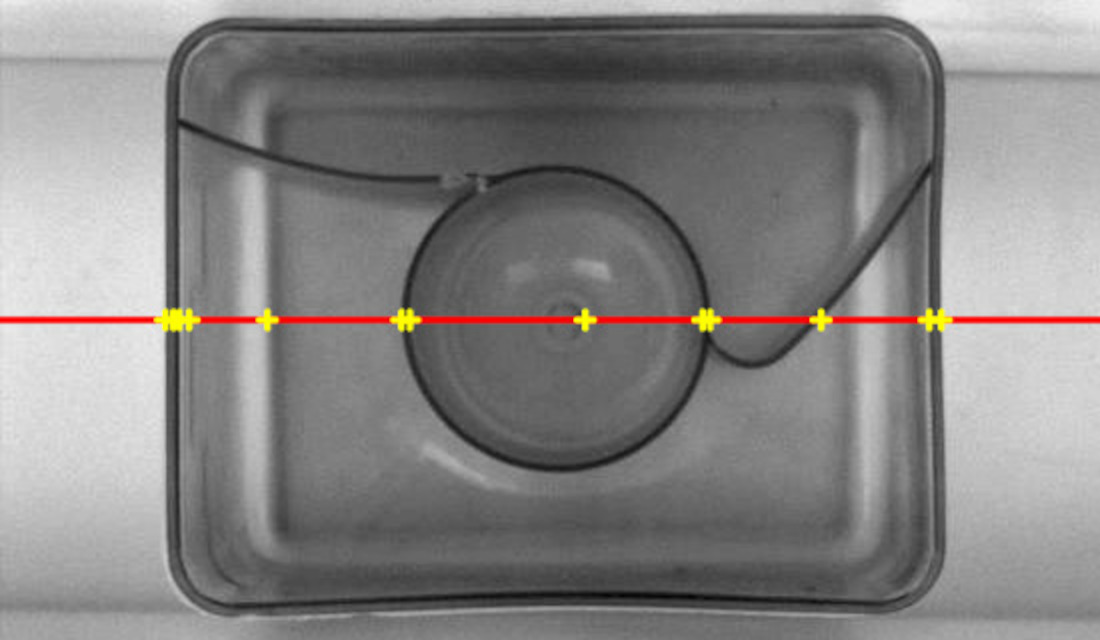

In the summer of 2012, I was working at a cool Polish startup developing factory inspection systems. We were using cameras to find broken products on the assembly line.

As the lead of the algorithms team, I was building specialised solution for each use case. To check photos of dishwasher capsules, I’d detect the edges in the picture, then fit the perfect shape of a correct capsule to it, then measure the distance of the actual capsule edge from the perfect model. Working in my little AI corner of shape-checking dishwasher capsules, I was using different techniques from my colleagues working on reading barcodes.

This type of programming is still very much possible and in use. But checking the company’s website today, the product now prominently features capabilities based on neural networks. These allow checking for defects without building specialized algorithms each time. The neural network can simply learn from the given examples.

🏞️ Image recognition (ImageNet)

Image recognition is a classic example of something that people can do without much thinking, but computers have been struggling with for decades.

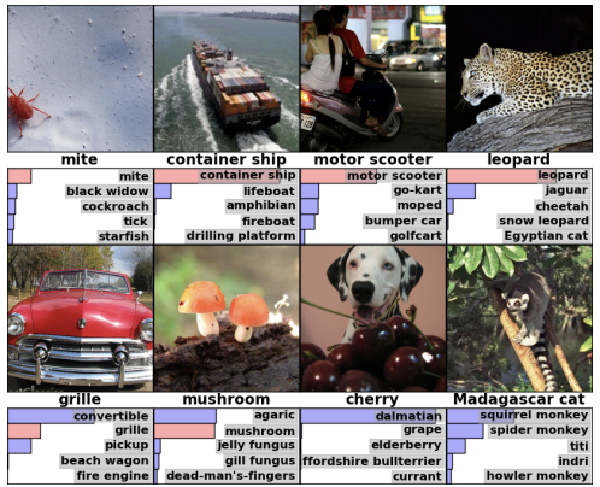

The most prestigious competition in image recognition is Stanford ImageNet . Until 2011, the winning solutions tended to use a technique called support vector machines.

In the support vector machines approach, the algorithm is trained on a dataset of already labeled images. Each image is first transformed into a vector of scalar features (for examples, the number of right angles in the picture, the contrast ratio, etc.). Then, we compute the optimal way of separating different categories of vectors that match the different labels. (that is, we try to learn the difference between vectors representing the photos of cats from those representing photos of dogs, etc.)

Support vector machines used to be very popular in vision applications. The main area of innovation was in how to extract features from each image. The winning 2011 paper only devotes one sentence to SVMs: As for learning, we employ linear SVMs and train them using Stochastic Gradient Descent. The rest of the paper is all about features.

The breakthrough 2012 ImageNet paper

One year later (2012), a team at University of Toronto won ImageNet using a solution that did not use SVMs. Instead, they demonstrated a successful application of neural networks.

♟️ Games

Back in 70s, playing chess at expert level used to be thought of as the pinnacle of what artificial intelligence could achieve in the future.

In May 1997 IBM Deep Blue defeated the world chess champion Garry Kasparov in a 6-game match of chess. (A depressing day for humankind, as per a Guardian article.)

Deep Blue was a chess program, with the rules of the game built-in. It included a database of how to play the opening and the optimal endgame tactics. In the middle game, it evaluated 200 million positions per second to find which move is optimal. To guide the search, it used a sophisticated function designed by chess experts that assigned a score to teach chess position, evaluating it’s strength.

In contrast, when in March 2016 AlphaGo defeated a top Go player Lee Sedol, it used a very different approach. Rather than relying on expert knowledge built-in in the program to evaluate positions, it used deep neural networks trained on a dataset of go games. It also used another neural network to suggest best moves for each position.

Breakthroughs

First neural networks were proposed and studied in the 40s and 50s. And yet all of the adoption milestones mentioned above happened over the last 15 years. What made the neural networks so effective, so suddenly?

Backpropagation

The first breakthrough was theoretical. In the 1986 Nature paper Rumelhart, Hinton & Williams introduced the idea of using backpropagation to train neural networks. In this approach, as we’re training the neural network on examples, we backtrack through the network each time, adjusting the weights of all neurons involved in producing each results. This enabled more effective training of increasingly deep networks.

GPUs

GPU chip (🤖 Midjourney)

Graphical Processing Unit (GPU) is a type of computer processor. In contrast with the standard CPU (central processing units), GPUs can perform simpler operations, but can execute more of them at the same time.

The University of Toronto 2012 ImageNet paper features a notable mention: To make training faster, we used (..) a very efficient GPU implementation of the convolution operation.

GPUs were originally created to render computer graphics. The team at University of Toronto repurposed the GPU, so that instead of computing pixel colors, it trained a neural network. This implementation was highly efficient (in terms of speed and electricity use), allowing the team to train a much bigger model than their budget would allow with a CPU implementation.

Conclusion

The theoretical breakthroughs in neural network design, coupled with the use of accellerated hardware to train them, enabled the spectacular growth of deep learning that we see today.

While in the past each field of artificial intelligence (image classification, industrial quality inspection, playing games, etc.) had their own specialized tools, today we’re using neural networks in all of them.

This is important not just as a proof of versatility and viability of neural networks. It also explains the rapid acceleration of AI development in the last decade: for the first time the entire field shares similar methods and technological stack, meaning that breakthroughs developed in the context of one application, now can immediatelt benefit many other applications.